Is Virgin Media traffic shaping protocol 41 (6in4 IPv6)?

This is a question that has come up a few times in the past. Customers on Virgin Media's UK residential broadband have asked whether Virgin Media is traffic shaping protocol 41, the IP protocol that carries 6in4 IPv6 tunnels such as those provided by Hurricane Electric. Virgin Media have consistently denied that any shaping of any kind is occurring, but I'm not convinced nothing is going on in their network, so I decided to dig a little deeper.

Virgin Media doesn't "filter" protocol 41, so they aren't blocking it. We know that for sure, because 6in4 tunnels do work on a Virgin Media connection.

Across both the Virgin Media and Hurricane Electric community forums, there have been posts about this topic over the years, with other Virgin Media customers sharing speed tests that tell a similar story: a clear performance hit for 6in4 IPv6 compared to native IPv4. Here are some of those threads:

- https://community.virginmedia.com/t5/Speed/traffic-shaping-for-protocol-41/td-p/4023667

- https://forums.he.net/index.php?topic=3990.0

- https://community.virginmedia.com/t5/QuickStart-set-up-and/IPv6-support-on-Virgin-media/td-p/35748/page/82

You could argue this is simply down to the overhead of 6in4, but I don't think it is. Several people have shown 6in4 tunnels on other broadband providers (e.g. Sky) and on other connections sustaining much faster speeds, which hints that Virgin Media is the problem.
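To put the overhead argument into numbers: 6in4 adds a single 20-byte IPv4 header to every IPv6 packet. The rough per-packet calculation below is a sketch that assumes a 1500-byte IPv4 path MTU, TCP without options, and ignores link-layer framing because it is identical in both cases.

```python
# Rough per-packet overhead comparison: native IPv4 TCP vs TCP over a 6in4 tunnel.
# Assumptions (not measured): 1500-byte IPv4 path MTU, no IP/TCP options,
# Ethernet framing ignored because it is the same in both cases.
IPV4_HEADER = 20  # bytes
IPV6_HEADER = 40  # bytes
TCP_HEADER = 20   # bytes (TCP timestamps would add 12 more)


def tcp_payload_per_packet(path_mtu: int, tunnelled_6in4: bool) -> int:
    """TCP payload bytes carried in one full-size packet on the path."""
    if tunnelled_6in4:
        # The whole IPv6 packet sits inside an outer IPv4 header (protocol 41),
        # so the IPv6 packet may be at most path_mtu - 20 bytes.
        inner_mtu = path_mtu - IPV4_HEADER
        return inner_mtu - IPV6_HEADER - TCP_HEADER
    return path_mtu - IPV4_HEADER - TCP_HEADER


native = tcp_payload_per_packet(1500, tunnelled_6in4=False)      # 1460
six_in_four = tcp_payload_per_packet(1500, tunnelled_6in4=True)  # 1420
ratio = six_in_four / native

print(f"native IPv4 TCP payload: {native} bytes/packet")
print(f"6in4 TCP payload:        {six_in_four} bytes/packet")
print(f"6in4 throughput ceiling: {ratio:.1%} of native")
```

Under those assumptions, header overhead alone should cost 6in4 less than 3% of the throughput of native IPv4.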

Testing something like this relies on being able to test the same tunnel endpoint from a completely separate provider, one with no ties to Virgin Media's network, to get the other side of the picture.

I happen to have a VPS hosted by Linode in London which can serve as “another ISP”. I will configure another 6in4 tunnel using the same IPv4 tunnel endpoint that I’m using on my Virgin Media connection, to see where the performance bottleneck might be.

Setting up the test

Because my Linode VPS already has native IPv6, I have made some changes to the typical default configuration. Mainly, I don't want Hurricane Electric to become the default IPv6 gateway, so instead of the usual default route I add a separate route with a higher metric than Linode's default IPv6 route. The kernel prefers the route with the lowest metric, so the tunnel is not used by default. This lets me keep the tunnel configured without sending all IPv6 traffic through it, which is what I want.

My Linode VPS has two network interfaces:

- eth0 — Primary network interface with native IPv6

- he-ipv6 — Sit tunnel interface, default IPv6 gateway overridden

I’m not using the /48 or /64 routed prefixes as I don’t need them for this purpose, just the basic configuration to have the Hurricane Electric tunnel configured for traffic to go through HE.net. The only IPv6 address I need for this is the Client IPv6 address on my side of the tunnel.

The tunnel endpoint on both my Virgin Media connection and the Linode VPS is 216.66.80.26 (London, UK). I believe Hurricane Electric has since added more London tunnel servers, and this is no longer the endpoint selected by default for UK-based users (it wasn't for me, anyway). My tunnel has been configured for a number of years, however, so it still uses the older endpoint.
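The setup described above (a sit tunnel named he-ipv6, only the Client IPv6 address, and a default route with a higher metric than the native one) can be sketched as iproute2 commands. This is a minimal sketch that only prints the commands. The helper name is hypothetical, and so are the VPS IPv4 address (203.0.113.10, a documentation range), the Client IPv6 address (2001:db8::2/64, also a documentation range), and the metric of 2048. Check the metric on Linode's own route with `ip -6 route show default` and pick something larger.

```python
import shlex
from typing import List


def sit_tunnel_commands(name: str, local_v4: str, remote_v4: str,
                        client_v6: str, metric: int) -> List[str]:
    """Return (without running) iproute2 commands for a 6in4 sit tunnel
    whose IPv6 default route is deliberately less preferred."""
    commands = [
        # 6in4 tunnel (IP protocol 41) between the two IPv4 endpoints.
        ["ip", "tunnel", "add", name, "mode", "sit",
         "remote", remote_v4, "local", local_v4, "ttl", "255"],
        ["ip", "link", "set", name, "up"],
        # Only the Client IPv6 address; no routed /48 or /64 prefixes.
        ["ip", "addr", "add", client_v6, "dev", name],
        # Default route through the tunnel with a larger (less preferred)
        # metric than the native route, so it is not used unless selected.
        ["ip", "-6", "route", "add", "::/0", "dev", name,
         "metric", str(metric)],
    ]
    return [shlex.join(cmd) for cmd in commands]


if __name__ == "__main__":
    for line in sit_tunnel_commands(
        name="he-ipv6",
        local_v4="203.0.113.10",     # hypothetical VPS IPv4 (documentation range)
        remote_v4="216.66.80.26",    # HE London tunnel endpoint from the text
        client_v6="2001:db8::2/64",  # hypothetical stand-in for the Client IPv6 address
        metric=2048,                 # hypothetical; must exceed the native route's metric
    ):
        print(line)
```

The printed commands need to be run as root. Traffic only uses the tunnel when something explicitly binds to he-ipv6, such as `curl --interface he-ipv6`.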

Testing methodology

A lot of the tests carried out by other Virgin Media customers were mainly IPv6 HTTP speed tests in a web browser and traceroute analysis. HTTP speed test websites in a browser aren’t really going to be possible on this VPS for two reasons:

- Because my Hurricane Electric tunnel is not the default route, any IPv6-enabled speed test website will not pick up the tunnel.

- IPv6 speed tests can be quite wild and inconsistent based on previous experience with 6in4, so I’m going to avoid them for the test.

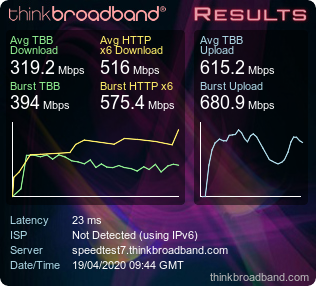

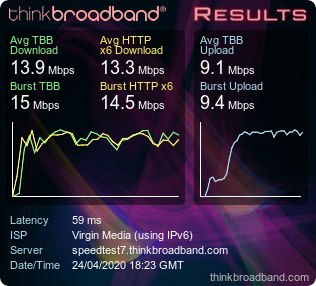

I did use the HTTP speed test from thinkbroadband.com, which is IPv6 enabled, just to get a baseline of the native IPv6 performance on the Linode VPS so I know what bandwidth is available. That will fluctuate from time to time, but as you can see above, there is plenty to play with.

For the test itself, however, I elected to download a 1 GB test file from thinkbroadband.com over IPv6. This is a consistent and repeatable test and should be a good alternative. For this I used cURL, because I can force IPv4 or IPv6 (-4/-6) and bind the transfer to a specific network interface (--interface) to ensure traffic is going through the right place.
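If you want to repeat the measurement several times and record the numbers rather than read the progress meter, you can wrap the same cURL invocation. This is a minimal sketch. The function names are hypothetical. It relies on curl's `--write-out '%{speed_download}'` variable, which reports the average download speed in bytes per second, and uses the thinkbroadband URLs from the text.

```python
import subprocess
from typing import List, Optional

# thinkbroadband.com test files used in the text.
TEST_URLS = {
    4: "http://ipv4.download.thinkbroadband.com/1GB.zip",
    6: "http://ipv6.download.thinkbroadband.com/1GB.zip",
}


def curl_args(ip_version: int, interface: Optional[str] = None) -> List[str]:
    """Build a curl command that forces IPv4/IPv6, optionally binds to an
    interface, discards the body and prints the average speed in bytes/s."""
    if ip_version not in TEST_URLS:
        raise ValueError("ip_version must be 4 or 6")
    args = ["curl", f"-{ip_version}", "--silent", "--output", "/dev/null",
            "--write-out", "%{speed_download}"]
    if interface is not None:
        args += ["--interface", interface]
    args.append(TEST_URLS[ip_version])
    return args


def measure_mbit_per_s(ip_version: int, interface: Optional[str] = None) -> float:
    """Run one download (requires curl and network access); return Mbit/s."""
    result = subprocess.run(curl_args(ip_version, interface),
                            capture_output=True, text=True, check=True)
    return float(result.stdout.strip()) * 8 / 1_000_000
```

On the VPS, `measure_mbit_per_s(6, "eth0")` and `measure_mbit_per_s(6, "he-ipv6")` would compare native IPv6 with the tunnel. Each call downloads the full 1 GB file.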

Testing the 6in4 IPv6 performance

Here are the results of the 1GB download test comparing the Linode VPS and my Virgin Media connection with 6in4 IPv6 deployed.

1 GB test file Linode VPS (Native IPv6):

[root@web ~]# curl -6 --interface eth0 http://ipv6.download.thinkbroadband.com/1GB.zip -o tb_1GB.zip

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 1024M 100 1024M 0 0 64.4M 0 0:00:15 0:00:15 --:--:-- 63.1M

1 GB test file Linode VPS (HE.net 6in4 IPv6):

Was actually slightly faster?!

[root@web ~]# curl -6 --interface he-ipv6 http://ipv6.download.thinkbroadband.com/1GB.zip -o tb_1GB.zip

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 1024M 100 1024M 0 0 77.3M 0 0:00:13 0:00:13 --:--:-- 87.3M

To keep things as fair as possible, I ran the Virgin Media tests directly on my Linksys WRT3200ACM, which runs OpenWrt, with my Super Hub 3 in modem mode. Here I am using the same cURL test, but sending the output straight to /dev/null so it doesn't fill up the overlay filesystem.

1 GB test file Virgin Media (Native IPv4):

root@linksys-wrt3200acm:~# curl -4 http://ipv4.download.thinkbroadband.com/1GB.zip -o /dev/null

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 1024M 100 1024M 0 0 13.1M 0 0:01:17 0:01:17 --:--:-- 13.2M

1 GB test file Virgin Media (HE.net 6in4 IPv6):

root@linksys-wrt3200acm:~# curl -6 http://ipv6.download.thinkbroadband.com/1GB.zip -o /dev/null

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 1024M 100 1024M 0 0 1587k 0 0:11:00 0:11:00 --:--:-- 1657k

The averages above are 1587k against 13.1M, both in curl's 1024-based units, or roughly 13 Mbit/s against 110 Mbit/s. That means the download speed with 6in4 IPv6 is only about 12% of the bandwidth available, a massive speed loss of roughly 88%. My line is 100/10 and hovers around 100–110 Mbps down and 10–12 Mbps up thanks to Virgin Media's over-provisioning. The 6in4 IPv6 download seems capped at around 15 Mbps. On upload, seeing about 10 Mbps over 6in4 would be normal given my overall upload speed, but the download speed seems heavily capped, which is consistent with other reports. I've seen others report topping out at about 20 Mbps and being unable to go any further.
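curl's progress meter uses 1024-based suffixes (k, M, G) for bytes per second, while line speeds are quoted in decimal megabits per second. The small sketch below converts the Virgin Media averages so they can be compared directly with the 100/10 line. The function name is hypothetical.

```python
# curl's progress meter suffixes are 1024-based multiples of bytes/s.
SUFFIX = {"": 1, "k": 1024, "M": 1024 ** 2, "G": 1024 ** 3}


def curl_speed_to_mbit(value: str) -> float:
    """Convert a curl progress-meter speed such as '13.1M' to Mbit/s."""
    unit = value[-1] if value[-1] in "kMG" else ""
    number = float(value[:-1]) if unit else float(value)
    return number * SUFFIX[unit] * 8 / 1_000_000


averages = {
    "Virgin Media, native IPv4": "13.1M",
    "Virgin Media, HE.net 6in4": "1587k",
}
for label, speed in averages.items():
    print(f"{label}: {curl_speed_to_mbit(speed):.1f} Mbit/s")

ratio = curl_speed_to_mbit("1587k") / curl_speed_to_mbit("13.1M")
print(f"6in4 as a share of IPv4: {ratio:.0%}")
```

The same function works for the Linode figures, e.g. `curl_speed_to_mbit("77.3M")`.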

Bonus IPv6 test with Mullvad

More recently I configured a WireGuard client connecting to Mullvad (which supports IPv6) because I was curious about the outcome. Mullvad's IPv6 uses NAT66, so you only get a single /128 ULA address on the client side, but that's enough to test IPv6 here. Here's the same 1 GB download test (this time from speedtest.tele2.net) using Mullvad with WireGuard on the same router and the same connection. The only difference is the encapsulation: IPv6 carried over UDP instead of protocol 41.

root@linksys-wrt3200acm:/tmp# curl -6 --interface wg http://speedtest.tele2.net/1GB.zip -o /dev/null

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 1024M 100 1024M 0 0 11.5M 0 0:01:28 0:01:28 --:--:-- 11.2

Outcome: the average speed is a little slower than the IPv4 test, but very close considering the VPN overhead, and performance over a VPN is likely to vary a bit anyway. The key takeaway is that Mullvad's IPv6 provides much better speeds than 6in4, and I don't think you can simply argue that's because it's "native IPv6".
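WireGuard actually carries more per-packet overhead than 6in4. Every WireGuard data packet has a 16-byte header (type, reserved, receiver index, counter) and a 16-byte Poly1305 tag, inside UDP and an outer IPv4 header. The sketch below compares the two on Virgin Media's IPv4 underlay. It assumes a 1500-byte path MTU, no IP/TCP options, WireGuard padding ignored, and the largest inner MTU that fits. Mullvad's actual tunnel MTU is not known here.

```python
# Per-packet encapsulation overhead on an IPv4 underlay (assumptions above).
OUTER_IPV4 = 20
UDP = 8
WG_DATA_HEADER = 16  # type + reserved + receiver index + counter
WG_AUTH_TAG = 16     # Poly1305 tag on every data packet
IPV6 = 40
TCP = 20
PATH_MTU = 1500

overheads = {
    "6in4 (protocol 41)": OUTER_IPV4,
    "WireGuard over UDP": OUTER_IPV4 + UDP + WG_DATA_HEADER + WG_AUTH_TAG,
}

native_ipv4_payload = PATH_MTU - OUTER_IPV4 - TCP  # plain IPv4 TCP, for reference
for name, extra in overheads.items():
    inner_mtu = PATH_MTU - extra
    ipv6_tcp_payload = inner_mtu - IPV6 - TCP
    share = ipv6_tcp_payload / native_ipv4_payload
    print(f"{name}: {extra} bytes overhead, inner MTU {inner_mtu}, "
          f"IPv6 TCP payload {ipv6_tcp_payload} ({share:.1%} of native IPv4)")
```

WireGuard spends three times as many bytes per packet on encapsulation as 6in4, yet it ran close to line rate. That fits the view that overhead is not what holds 6in4 back here.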

iperf3 tests

Using iperf3, the story is just as clear. Here I used a public iperf server to test both IPv4 and IPv6 in reverse mode (-R). In this mode the server sends and my router receives, so the test measures download speed.

Virgin Media IPv4 test:

My Virgin Media IPv4 address has been obscured as 82.xx.xx.xxx.

Connecting to host bouygues.iperf.fr, port 5201

Reverse mode, remote host bouygues.iperf.fr is sending

[ 5] local 82.xx.xx.xxx port 38146 connected to 89.84.1.222 port 5201

[ ID] Interval Transfer Bitrate

[ 5] 0.00-1.00 sec 11.5 MBytes 96.8 Mbits/sec

[ 5] 1.00-2.00 sec 13.3 MBytes 112 Mbits/sec

[ 5] 2.00-3.00 sec 12.7 MBytes 106 Mbits/sec

[ 5] 3.00-4.00 sec 13.3 MBytes 112 Mbits/sec

[ 5] 4.00-5.00 sec 13.3 MBytes 112 Mbits/sec

[ 5] 5.00-6.00 sec 13.3 MBytes 112 Mbits/sec

[ 5] 6.00-7.00 sec 13.3 MBytes 112 Mbits/sec

[ 5] 7.00-8.00 sec 13.3 MBytes 112 Mbits/sec

[ 5] 8.00-9.00 sec 13.3 MBytes 112 Mbits/sec

[ 5] 9.00-10.00 sec 13.3 MBytes 112 Mbits/sec

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate Retr

[ 5] 0.00-10.05 sec 139 MBytes 116 Mbits/sec 4 sender

[ 5] 0.00-10.00 sec 131 MBytes 110 Mbits/sec receiver

iperf Done.

Virgin Media Hurricane Electric 6in4 IPv6 test:

I am testing 6in4 from my router directly, so my IPv6 will appear as the Client IPv6 address on my side of the tunnel, rather than having an IPv6 address from my routed /48 prefix on a LAN client.

Connecting to host bouygues.iperf.fr, port 5201

Reverse mode, remote host bouygues.iperf.fr is sending

[ 5] local 2001:470:xxxx:xx::2 port 35558 connected to 2001:860:deff:1000::2 port 5201

[ ID] Interval Transfer Bitrate

[ 5] 0.00-1.00 sec 1.62 MBytes 13.6 Mbits/sec

[ 5] 1.00-2.00 sec 1.44 MBytes 12.1 Mbits/sec

[ 5] 2.00-3.00 sec 1.93 MBytes 16.2 Mbits/sec

[ 5] 3.00-4.00 sec 1.42 MBytes 11.9 Mbits/sec

[ 5] 4.00-5.00 sec 1.79 MBytes 15.0 Mbits/sec

[ 5] 5.00-6.00 sec 1.56 MBytes 13.1 Mbits/sec

[ 5] 6.00-7.00 sec 1.82 MBytes 15.2 Mbits/sec

[ 5] 7.00-8.00 sec 1.30 MBytes 10.9 Mbits/sec

[ 5] 8.00-9.00 sec 1.52 MBytes 12.8 Mbits/sec

[ 5] 9.00-10.00 sec 1.31 MBytes 11.0 Mbits/sec

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate Retr

[ 5] 0.00-10.08 sec 16.9 MBytes 14.1 Mbits/sec 58 sender

[ 5] 0.00-10.00 sec 15.7 MBytes 13.2 Mbits/sec receiver

iperf Done.

As with the cURL download test, the bitrate peaked at around 16.2 Mbits/sec, hinting at some form of bottleneck or limit being imposed.
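To quantify how tightly the tunnel is held in that range, the per-second lines of the 6in4 run can be summarised. This is a minimal sketch that parses iperf3's text output in the form shown above. It assumes every bitrate is reported in Mbits/sec, and the function name is hypothetical. iperf3 can also emit JSON with `-J`, which is sturdier to parse, but this sticks to the text format shown.

```python
import re
import statistics
from typing import List

# Matches per-interval lines such as
# "[ 5] 0.00-1.00 sec 1.62 MBytes 13.6 Mbits/sec".
# Summary lines end in "sender"/"receiver", so the "$" anchor skips them.
INTERVAL_LINE = re.compile(
    r"^\[\s*\d+\]\s+[\d.]+-[\d.]+\s+sec\s+[\d.]+\s+\w+\s+([\d.]+)\s+Mbits/sec$"
)


def interval_bitrates(output: str) -> List[float]:
    """Return the per-interval bitrates (Mbit/s) found in iperf3 text output."""
    rates = []
    for line in output.splitlines():
        match = INTERVAL_LINE.match(line.strip())
        if match:
            rates.append(float(match.group(1)))
    return rates


# Interval and summary lines from the HE.net 6in4 run above.
SIX_IN_FOUR_OUTPUT = """
[ 5] 0.00-1.00 sec 1.62 MBytes 13.6 Mbits/sec
[ 5] 1.00-2.00 sec 1.44 MBytes 12.1 Mbits/sec
[ 5] 2.00-3.00 sec 1.93 MBytes 16.2 Mbits/sec
[ 5] 3.00-4.00 sec 1.42 MBytes 11.9 Mbits/sec
[ 5] 4.00-5.00 sec 1.79 MBytes 15.0 Mbits/sec
[ 5] 5.00-6.00 sec 1.56 MBytes 13.1 Mbits/sec
[ 5] 6.00-7.00 sec 1.82 MBytes 15.2 Mbits/sec
[ 5] 7.00-8.00 sec 1.30 MBytes 10.9 Mbits/sec
[ 5] 8.00-9.00 sec 1.52 MBytes 12.8 Mbits/sec
[ 5] 9.00-10.00 sec 1.31 MBytes 11.0 Mbits/sec
[ 5] 0.00-10.08 sec 16.9 MBytes 14.1 Mbits/sec 58 sender
[ 5] 0.00-10.00 sec 15.7 MBytes 13.2 Mbits/sec receiver
"""

rates = interval_bitrates(SIX_IN_FOUR_OUTPUT)
print(f"intervals: {len(rates)}")
print(f"mean {statistics.mean(rates):.2f}, min {min(rates)}, max {max(rates)} Mbit/s")
print(f"std dev {statistics.stdev(rates):.2f} Mbit/s")
```

The mean of the intervals lines up with iperf3's own receiver figure, and no second gets anywhere near the IPv4 run.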

If we bind iperf3 to the WireGuard interface with IPv6 and repeat the same test, we get a very different result:

Reverse mode, remote host bouygues.iperf.fr is sending

[ 5] local fc00:bbbb:bbbb:bb01::1:611c port 34967 connected to 2001:860:deff:1000::2 port 5201

[ ID] Interval Transfer Bitrate

[ 5] 0.00-1.00 sec 8.16 MBytes 68.5 Mbits/sec

[ 5] 1.00-2.00 sec 12.1 MBytes 101 Mbits/sec

[ 5] 2.00-3.00 sec 12.1 MBytes 101 Mbits/sec

[ 5] 3.00-4.00 sec 12.5 MBytes 105 Mbits/sec

[ 5] 4.00-5.00 sec 12.6 MBytes 105 Mbits/sec

[ 5] 5.00-6.00 sec 12.5 MBytes 105 Mbits/sec

[ 5] 6.00-7.00 sec 12.5 MBytes 105 Mbits/sec

[ 5] 7.00-8.00 sec 12.5 MBytes 105 Mbits/sec

[ 5] 8.00-9.00 sec 12.5 MBytes 105 Mbits/sec

[ 5] 9.00-10.00 sec 11.6 MBytes 97.1 Mbits/sec

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate Retr

[ 5] 0.00-10.06 sec 124 MBytes 104 Mbits/sec 2 sender

[ 5] 0.00-10.00 sec 119 MBytes 100 Mbits/sec receiver

iperf Done.
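The "local" address iperf3 prints is a quick way to confirm which path a test actually took. This is a minimal sketch using Python's ipaddress module. It assumes 2001:470::/32 is Hurricane Electric's allocation (tunnelbroker addresses live in it) and that Mullvad's NAT66 address falls in the fc00::/7 unique local range. The function name is hypothetical, and the HE address in the example is a made-up stand-in because the real Client IPv6 address is obscured.

```python
import ipaddress

# Prefixes used to label the path a test took (assumptions stated above).
KNOWN_PATHS = [
    (ipaddress.ip_network("2001:470::/32"), "Hurricane Electric 6in4 tunnel"),
    (ipaddress.ip_network("fc00::/7"), "unique local address (e.g. Mullvad NAT66)"),
]


def classify_source(address: str) -> str:
    """Label the local address iperf3 reported on its 'local ...' line."""
    ip = ipaddress.ip_address(address)
    if ip.version == 4:
        return "IPv4"
    for network, label in KNOWN_PATHS:
        if ip in network:
            return label
    return "other IPv6"


if __name__ == "__main__":
    # The fc00:... address is from the WireGuard run above; the 2001:470:...
    # address is hypothetical.
    for addr in ("fc00:bbbb:bbbb:bb01::1:611c", "2001:470:1f1c:abc::2"):
        print(addr, "->", classify_source(addr))
```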

The Linode VPS is obviously faster than the average Virgin Media connection given the backbone it's connected to. Even so, its download tests show the speeds that can be sustained over 6in4 outside of Virgin Media, which rules out the HE tunnel endpoint itself being capped or limited in some way. Of course, the overall performance of the HE.net IPv6 tunnel may vary at any given time; after all, it's a free service with no SLA or guarantees.

Adding IPv6 from Mullvad over WireGuard into the mix shows that IPv6 over UDP can pretty much saturate my full line speed too. That suggests we have now found evidence of something being done specifically to 6in4 on Virgin Media. What that is, however, is still unknown.
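For a side-by-side view, the three iperf3 summaries can be normalised against the IPv4 run. The figures below are copied from the output above: receiver bitrate, sender transfer size and sender retransmissions. The retransmission rate is included because a higher figure generally points to more packets being lost or reordered on that path. This is a small sketch, not a statistical analysis of single ten-second runs.

```python
# (receiver Mbit/s, sender MBytes transferred, sender retransmissions)
runs = {
    "native IPv4": (110.0, 139.0, 4),
    "HE.net 6in4 IPv6": (13.2, 16.9, 58),
    "Mullvad IPv6 over WireGuard": (100.0, 124.0, 2),
}

baseline = runs["native IPv4"][0]
for path, (rate, sent_mbytes, retransmits) in runs.items():
    share = rate / baseline
    retr_per_100mb = retransmits / sent_mbytes * 100
    print(f"{path:28} {rate:6.1f} Mbit/s {share:5.0%} of IPv4 "
          f"{retr_per_100mb:7.1f} retransmits per 100 MBytes")
```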

Possible theories on what could be happening

Virgin Media have consistently denied that there is any traffic management of protocol 41, and there is no mention of it, or of IPv6 tunnels specifically, in their official traffic management policy. That means we have to look at other possibilities.

- Traffic prioritisation. Could Virgin Media be prioritising certain traffic over other protocols? For example, latency-sensitive traffic such as DNS, VoIP and gaming could have a higher priority and be allocated more resources. Other protocols like protocol 41/6in4 may then fall into a lower priority class and be unable to get the full bandwidth. This wouldn't necessarily be classed as shaping; it's more of a QoS (Quality of Service) scenario. Higher speeds are reserved for certain types of traffic, while the rest is left in a scavenger/lower class that is restricted to a certain amount of bandwidth and can't use all the available resources on various links.

- The Super Hub 3. It's well known within the Virgin Media community that the Super Hub 3 (the CPE router/modem provided by Virgin Media) suffers from latency and performance issues because of bugs in the Puma 6 SoC; Intel even officially acknowledged the issues with an advisory. It's possible that 6in4 is being hampered by these SoC issues. However, in my own experience I have seen the same performance problems on the first version of the Super Hub (you know, the one that doesn't do dual-band wireless) in modem mode. So I'm not sure the Super Hub 3 is entirely to blame, but it certainly won't be helping either.

- Are Hurricane Electric throttling tunnels based on their usage? After all, it is a free service right? Well, they aren’t. I received an official acknowledgement of this from one of their engineers. The only policies in place are filtering SMTP and IRC to prevent abuse, but otherwise there is no shaping or traffic management occurring.

- Is the tunnel on my Virgin Media connection misconfigured? My router runs OpenWrt, with the IPv6 tunnel on the wan6 interface and the /48 prefix delegated across my LAN (br-lan). As far as I know, I have followed the configuration recommended by the OpenWrt documentation and other sources, and it works correctly (10/10 on test-ipv6.com etc.). The default MTU when configuring 6in4 on OpenWrt is 1280, which is a little low. Increasing it to 1480 (if not already set) may help a bit, but it isn't a magic bullet.

- Virgin Media are traffic shaping, and the forum staff who have previously stated they aren't are either genuinely not aware of it or being deceptive about it. I really hope it isn't the latter, as that would be pretty shady. Really, only Virgin Media's network/engineering team would be able to confirm it, but I don't think we'll get an official public response from that part of the company. There's also the chance of a disconnect (ha, get it?!) between the forum staff and other parts of the company, e.g. if the question never reached the right department within Virgin Media.
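On the MTU point in the list above, the larger value can be set with UCI. This is a minimal sketch that only prints the commands. It assumes the 6in4 interface is called wan6, as in my setup, and that the WAN MTU is 1500, so 1480 is 1500 minus the 20-byte outer IPv4 header. The helper name is hypothetical.

```python
from typing import List


def uci_mtu_commands(interface: str, mtu: int) -> List[str]:
    """Return (without running) UCI commands that set a 6in4 interface MTU."""
    if not 1280 <= mtu <= 1480:
        # 1280 is the IPv6 minimum; 1480 = 1500-byte WAN MTU - 20-byte IPv4 header.
        raise ValueError("6in4 MTU should be between 1280 and 1480 on a 1500-byte WAN")
    return [
        f"uci set network.{interface}.mtu='{mtu}'",
        "uci commit network",
        f"ifup {interface}",  # bring the interface back up with the new MTU
    ]


for command in uci_mtu_commands("wan6", 1480):
    print(command)
```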

The official line from Virgin Media seems to be that, because this is standard residential broadband, they make no assurances about the speed or performance of tunnels or VPN connections. Nice cop-out. It is interesting, however, that more recently it has also been confirmed that the same issue happens on Virgin Media Business connections, and there is equally no real answer from that side either.

My personal thoughts on the issue

Based on my findings, the issue seems to be specific to 6in4 traffic. Carrying IPv6 over UDP instead (WireGuard to Mullvad) doesn't show the same performance problems. So the problem appears when the Client IPv4 Address of the tunnel points to a Virgin Media IP address and the traffic is specifically 6in4. The obvious conclusion would be a shaping issue, or something about how this traffic is treated within Virgin Media's core network, but there is also some evidence suggesting it could actually be a CPE problem.

More recently an article appeared on root.cz in the Czech Republic describing similar issues with IPv6 tunnels on Compal UPC/Vodafone. Despite being hundreds of miles away, the exact problem seen on Virgin Media seems to be a carbon copy in an entirely different network. The one common factor tying these two networks together? The CPE. It appears Compal UPC/Vodafone use very similar modems from Arris, the same supplier of modems to Virgin Media since the Super Hub 3. Hardware-wise, the same Intel Puma 6 SoC is in use in both networks, and by now the performance and latency issues with this SoC are well known.

Could it be the CPE all along? It's an interesting theory, and it's possible. My own experience running IPv6 tunnels on the original Super Hub (made by Netgear) showed the same issue. There could still be a hardware factor, though, if the CPU in each of the modems Virgin Media have used is unable to process and route 6in4 traffic quickly enough. 6in4 does have some CPU requirements, so it's not impossible. A lot of these modems will only be tested in certain environments and conditions, and outside of TCP/UDP they may well not be optimised for other traffic, but that is just a theory.
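To give a feel for the per-packet workload behind that theory, the sketch below estimates how many full-size 6in4 packets per second are needed for a given TCP goodput. It uses the 1280 and 1480 tunnel MTUs discussed earlier. It assumes full-size packets and IPv6/TCP headers without options, and ignores ACKs in the other direction. The result is therefore a rough lower bound, not a measurement of any modem, and the function name is hypothetical.

```python
IPV6_HEADER = 40  # bytes, no extension headers
TCP_HEADER = 20   # bytes, no options


def packets_per_second(goodput_mbit: float, tunnel_mtu: int) -> float:
    """Full-size 6in4 packets per second needed for a TCP goodput (Mbit/s)."""
    mss = tunnel_mtu - IPV6_HEADER - TCP_HEADER
    return goodput_mbit * 1_000_000 / 8 / mss


for mtu in (1280, 1480):
    print(f"110 Mbit/s over 6in4 at tunnel MTU {mtu}: "
          f"~{packets_per_second(110, mtu):,.0f} packets/s")
```

A larger MTU reduces the packet rate for the same speed, which is one reason raising it can help a little without fixing an underlying cap.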

I have also heard some chatter that the Virgin Media Hub4 used on the Gig1 package (and possibly non-Gig1 packages in DOCSIS 3.1 enabled areas) improves IPv6 tunnel performance. This modem still uses an Intel Puma SoC, but it is the 7 series chipset, not the infamous 6 series. Verifying this claim is difficult for me at the moment, as my area is not DOCSIS 3.1 yet and getting hold of a Hub4 otherwise doesn't seem possible (even though it should work under DOCSIS 3.0). If anyone happens to have a Hub4 in modem mode and either is using or knows how to set up an IPv6 tunnel, please get in touch!

The real solution

Of course, the answer will always be native IPv6, something Virgin Media have yet to deploy despite a lot of the more technical customers on Virgin Media being vocal about it (I'm one of them!). What is known about Virgin Media's IPv6 plans is that they are likely to use DS-Lite, which I have a whole other article about.

Do you use a 6in4 IPv6 tunnel on a Virgin Media connection and experience poor performance? If so, I’d love to hear from others about this!

Update 19/04/2020: Hurricane Electric confirmed that there is no traffic shaping occurring on the tunnelbroker.net service in any form, the only policies in place are filtering SMTP and IRC to prevent abuse. I did not expect there to be any shaping, but I thought I’d confirm it to be accurate.

Update 25/04/2020: I have spoken to Virgin Media about the issues described here, and it is being referred to their network side for additional comments on the 6in4 performance issues. Given the current situation with COVID-19 this is likely to take some time, which is completely understandable. Virgin Media customers using IPv6 tunnels are probably a very small portion of the overall customer base, so something like this would be considered quite a niche use case.

Update 16/06/2020: Added an additional IPv6 test using Mullvad for further insight.

Update 20/06/2020: Updated the 1GB download tests on my Virgin Media connection to be done directly on the router so there are minimal devices in the path.

Update 29/07/2020: Additional iperf3 test with WireGuard IPv6, and added information from root.cz about a similar story of IPv6 tunnel issues.

Update 06/08/2020: Virgin Media now appear to have finally acknowledged this issue! It has been reported by ISP Preview that the matter is officially being looked into!